Corral provides utilities that facilitate research into agent methodologies and simplify the creation, deployment, and evaluation of scientific agents and environments.

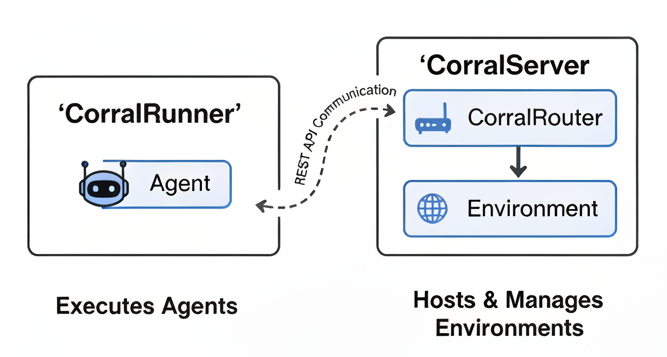

A microservice architecture designed for flexibility, scalability, and robust isolation.

Environments are the "world" the agent interacts with. They define the task space and the available tools, and provide observable feedback. They range from chemistry labs to HPC clusters.

Agents are modular entities for perception and decision-making, built with LLMs using scaffolds like ReAct, ToolCalling, LLMPlanner, and Reflection.

Tasks define problems for agents to solve, with scoring functions for evaluation. Tasks can be chained into TaskGroups for complex multi-stage challenges.
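To make the three concepts concrete, here is a minimal sketch of how an environment, an agent, and scored tasks could fit together. Every name in it (`ToyTitrationEnv`, `RuleBasedAgent`, `Task`, `run_task_group`) is hypothetical and is not Corral's API. The agent is a fixed rule standing in for an LLM scaffold, and the task group is assumed to simply run its tasks in order and collect one score per task.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Illustrative names only; none of this is Corral's actual API.


class ToyTitrationEnv:
    """Hypothetical environment: holds state, exposes tools, returns observations."""

    def __init__(self, ph: float) -> None:
        self.ph = ph
        self.tools: Dict[str, Callable[[], str]] = {
            "measure_ph": self.measure_ph,
            "add_base": self.add_base,
        }

    def measure_ph(self) -> str:
        return f"pH={self.ph:.1f}"

    def add_base(self) -> str:
        self.ph = min(self.ph + 1.0, 14.0)
        return "added one unit of base"

    def step(self, tool_name: str) -> str:
        """Run one tool call and return the observation as text."""
        if tool_name not in self.tools:
            return f"unknown tool: {tool_name}"
        return self.tools[tool_name]()


class RuleBasedAgent:
    """Stand-in for an LLM scaffold: observe, decide, act, repeat."""

    def run(self, env: ToyTitrationEnv, max_steps: int = 20) -> List[str]:
        trace: List[str] = []
        for _ in range(max_steps):
            observation = env.step("measure_ph")
            trace.append(observation)
            ph = float(observation.split("=")[1])
            if ph >= 7.0:  # goal reached, stop acting
                break
            trace.append(env.step("add_base"))
        return trace


@dataclass
class Task:
    """A problem plus the scoring function used to evaluate the outcome."""
    name: str
    start_ph: float
    score: Callable[[ToyTitrationEnv], float]


def neutral_score(env: ToyTitrationEnv) -> float:
    """Score 1.0 if the final pH is within 0.5 of neutral, else 0.0."""
    return 1.0 if abs(env.ph - 7.0) < 0.5 else 0.0


def run_task_group(agent: RuleBasedAgent, tasks: List[Task]) -> Dict[str, float]:
    """Run each task in order on a fresh environment and collect its score."""
    scores: Dict[str, float] = {}
    for task in tasks:
        env = ToyTitrationEnv(task.start_ph)
        agent.run(env)
        scores[task.name] = task.score(env)
    return scores


group = [
    Task("mild_acid", 5.0, neutral_score),
    Task("strong_acid", 2.0, neutral_score),
]
results = run_task_group(RuleBasedAgent(), group)
print(results)
```

The scoring function inspects only the final environment state, so the same task can evaluate any agent that speaks the environment's tool interface.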

Corral separates agents from environments via a client-server design with REST API communication.
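The separation described above can be illustrated with a small client-server sketch built only on the Python standard library. The `/step` endpoint, the JSON payload shape, and the names `EnvHandler` and `call_step` are assumptions made for illustration; Corral's real REST API may look different.

```python
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.request import ProxyHandler, Request, build_opener

# Hypothetical endpoint and payload shapes; not Corral's actual REST API.


class EnvHandler(BaseHTTPRequestHandler):
    """Server side: a toy environment reachable only over HTTP."""

    counter = 0  # toy environment state shared across requests

    def do_POST(self) -> None:
        if self.path != "/step":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length) or b"{}")
        EnvHandler.counter += int(payload.get("amount", 0))
        body = json.dumps({"observation": EnvHandler.counter}).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args) -> None:
        pass  # keep the example quiet


# Bypass any system proxy settings for the local call.
opener = build_opener(ProxyHandler({}))


def call_step(base_url: str, amount: int) -> dict:
    """Client side: the agent only sees the HTTP interface."""
    request = Request(
        f"{base_url}/step",
        data=json.dumps({"amount": amount}).encode(),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with opener.open(request, timeout=5) as response:
        return json.loads(response.read())


server = HTTPServer(("127.0.0.1", 0), EnvHandler)  # port 0: pick a free port
threading.Thread(target=server.serve_forever, daemon=True).start()
base_url = f"http://127.0.0.1:{server.server_address[1]}"

first = call_step(base_url, 2)
second = call_step(base_url, 3)
print(first, second)

server.shutdown()
server.server_close()
```

Because the client holds no reference to the environment object, the environment could run in a separate process or container without changing the agent code, which is the isolation benefit a client-server split is meant to provide.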

Pre-built scientific environments spanning chemistry, physics, materials science, and more.

If you use Corral in your research, please consider citing:

@article{ríos-garcía2026ai,
  title = {AI scientists produce results without reasoning scientifically},
  author = {Martiño Ríos-García and Nawaf Alampara and Chandan Gupta and Indrajeet Mandal and Sajid Mannan and Ali Asghar Aghajani and N. M. Anoop Krishnan and Kevin Maik Jablonka},
  year = {2026},
  journal = {arXiv preprint arXiv:2604.18805}
}

Start evaluating AI agents on scientific tasks in minutes.
